Why Most Compliance Systems Cannot Prove Data Integrity in Court

Article Summary

This second article in the series explores why digital records that satisfy audits can still fail as legal Evidence when challenged. It highlights the critical gap between data capture and evidential integrity, showing how systems designed for compliance often cannot prove reliability under legal scrutiny.

Introduction

In regulated healthcare and medical device environments, there is a widely held assumption – and it is an entirely understandable one. It is this: “If a system records everything, then it can prove everything.” But it cannot.

Most organisations operate on that basis. Data is captured, Data is stored, Data is Backed Up, and Data is then made available for audit. Similarly, Reports can be generated on demand and Dashboards present a reassuring picture of control and completeness.

From an operational perspective, this often works extremely well. But there is a difficulty – and it is a serious one. Making and storing data is not the same thing as proving it; that is, as ensuring it is Admissible in a Court of Law.

And in Court, only one of those matters. And that distinction usually remains invisible… until the moment it matters most.

The Difference No One Sees – Until It Matters

When digital records are relied upon in litigation or regulatory proceedings, the question is not whether the data exists. It is far more precise. Here is just one of the legal tests:

“Can you prove that this data is an accurate and unaltered representation of the event it purports to record, at the time it was created?”

That is not a technical question. It is the question that determines whether your data survives legal challenge at all – or fails before it is even considered.

Now that is a vastly different standard. It is not enough to show a record. The System that produced it must be capable of proving itself under legal scrutiny.

Courts are not concerned with how much data you hold, or how well organised it appears. They are concerned with whether the System that generated the “Evidence” can be trusted. Trusted not generally – but at the time it generated this “Evidence”.

And it is at this point that many otherwise well-designed compliance systems begin to struggle.

A Legal Reality – Quietly and Dangerously Overlooked

There is a long-standing legal principle, reflected in authorities such as R v Shepherd (see Article 1 in this series), that computer systems are generally presumed to be operating correctly.

At first glance, that sounds reassuring. But it is not. For it is only the starting point. If that presumption is challenged (and in contested proceedings it very often is) the position inevitably changes.

The litigant seeking to put their Data into Evidence (in order to help prove, and so win, their case) must then demonstrate that the System generating the Data in question was functioning properly at the relevant time, and that the data it produced can be relied upon.

It is at that moment that the gap appears. Not a technical gap. An evidential one. And not because the system has failed operationally. But because it was never designed to prove itself.

The Hidden Problem: Systems That Work – But Cannot Prove They Worked

Most compliance systems are built to support operations:

- to record events

- to track processes

- to satisfy audits

They are not typically designed with evidential scrutiny in mind. And that leads to a subtle but critical problem.

A System can appear to function perfectly – generating reports, passing inspections, supporting day-to-day activity – and yet still be unable to demonstrate, under examination, that it was functioning properly at the time the records were created, and that those records can be relied upon as Evidence.

The difficulty is not visible in normal use. It only emerges when someone asks a very simple question (in Court): “How do you know this record is exactly what it was at the time it was created?” Or put another way: “Has your system fixed the first writing of the Data in Time, so we know it never changed?”

At that point, many organisations discover that they do not, in fact, know.
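To make the idea of “fixing the first writing of the Data in Time” a little more concrete, here is a minimal sketch in Python. It is an illustration only, not a description of any particular product, and not a statement of what a Court would accept. The function names (`seal_record`, `is_unchanged`) and the record fields are hypothetical. The sketch takes a SHA-256 digest of a record and its creation time at the moment of writing, and later recomputes that digest to check that nothing has changed.

```python
import hashlib
import json
from datetime import datetime, timezone


def _canonical_bytes(record: dict, created_at: str) -> bytes:
    # Sorted keys and fixed separators, so identical content always
    # serialises to identical bytes (and so to an identical digest).
    return json.dumps(
        {"record": record, "created_at": created_at},
        sort_keys=True,
        separators=(",", ":"),
    ).encode("utf-8")


def seal_record(record: dict, created_at: datetime) -> dict:
    """Return the record with a digest taken at the moment of first writing."""
    stamp = created_at.isoformat()
    digest = hashlib.sha256(_canonical_bytes(record, stamp)).hexdigest()
    return {"record": record, "created_at": stamp, "sha256": digest}


def is_unchanged(sealed: dict) -> bool:
    """Recompute the digest and compare it with the one taken at creation."""
    current = hashlib.sha256(
        _canonical_bytes(sealed["record"], sealed["created_at"])
    ).hexdigest()
    return current == sealed["sha256"]


# Hypothetical example record; the field names are illustrative only.
sealed = seal_record(
    {"cycle_id": "C-001", "result": "pass"},
    datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc),
)
print(is_unchanged(sealed))    # True

# The same record with one value quietly altered after the event.
tampered = {**sealed, "record": {"cycle_id": "C-001", "result": "fail"}}
print(is_unchanged(tampered))  # False
```

One caution belongs with this sketch. A digest kept beside the record, in the same system, can be recalculated by anyone able to alter the record itself. For the check to carry weight, the digest would need to be held somewhere that the recording system cannot silently rewrite. That is an assumption of this illustration, and a design decision in its own right.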

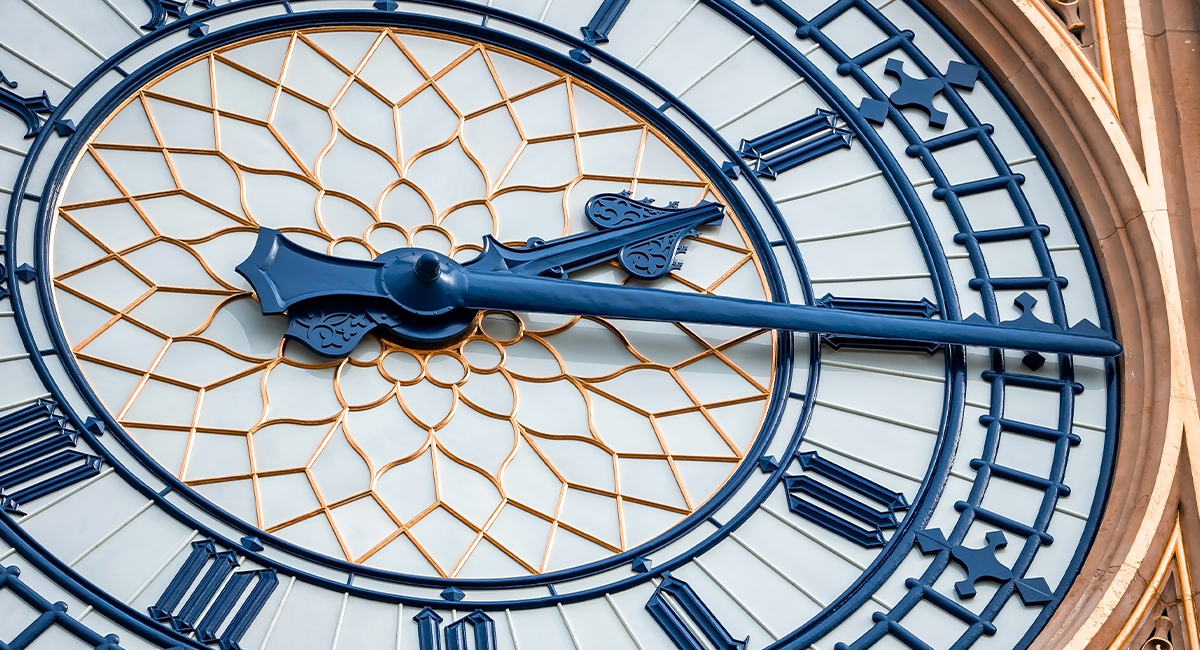

Time Is the Real Test

Courts are not interested in what a record looks like today. They are concerned with what it was, at the relevant moment in the past. That may be:

- the time of a clinical procedure

- the time of a test or certification

- the time a critical decision was made and recorded

The challenge is this: most systems are excellent at showing current data. Far fewer are capable of proving historical integrity. That distinction sits at the heart of what might be called ‘temporal evidential integrity’, being the ability to demonstrate, with confidence, that data has not changed in any material way over time.

Modern regulatory frameworks increasingly rely upon large-scale, real-world data sources, including Registries, to support Safety, Performance, and Lifecycle Evaluation. And rightly so.

Such systems bring scale, longitudinal reach, and a form of population-level visibility that was previously unavailable. But they also introduce a quiet and largely unexamined assumption.

And that assumption is that data which is sufficient for Regulatory Purposes will also be sufficient when tested as Evidence in a Court of Law.

It will not.

For Courts do not evaluate datasets in aggregate. They do not take comfort from scale. Nor do they rely upon system outputs simply because they exist. They ask a far more exacting question: “What was this record – at the moment it was created – and can you prove that it has not changed?”

At that point, questions of System Integrity, Data Provenance, and Operational State cease to be background considerations. They become decisive. And it is precisely here that many otherwise sophisticated compliance and registry-based systems begin to encounter difficulty.

Without that ability, admissibility – and, if admitted, evidential weight – begins to weaken.

Quietly at first. Then decisively. Then irrevocably.

The record may still exist. But its evidential value is already in serious question.

How Integrity Quietly and Invisibly Breaks Down

One of the more uncomfortable realities in digital systems is that degradation is rarely dramatic. It is gradual. Subtle. Often invisible.

Systems do not need to “fail” in order to become unreliable as Evidence. But computer systems do “drift”. Here are just a few examples:

- Time Drift

If different parts of a system are not perfectly synchronised, their records may become inconsistent with one another while each still appears sound – even if each was accurate when created.

- Structural Drift

Software evolves. Updates are applied. Data structures change. The data remains, but its legal meaning may not. It has been fixed neither in time nor in structure.

- Accumulated Change

Logs are rotated. Data is migrated. Storage formats evolve. Each step is individually reasonable. Collectively, they introduce evidential uncertainty.

None of this is visible in normal operation. All of it becomes visible under legal challenge.
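Time Drift is perhaps the easiest of these to picture. The short Python sketch below uses two hypothetical components, a steriliser and a release desk, whose clocks differ by 90 seconds. The component names, the size of the clock error and the events are all assumptions made for illustration. Each component faithfully records the time shown on its own clock, and yet the two records, read together, tell a story that never happened.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical clock errors for two components of one system.
CLOCK_OFFSET = {
    "steriliser": timedelta(seconds=0),      # clock is correct
    "release_desk": timedelta(seconds=-90),  # clock runs 90 seconds slow
}


def stamp(component: str, true_time: datetime) -> datetime:
    """The timestamp a component would record, given its own clock error."""
    return true_time + CLOCK_OFFSET[component]


t0 = datetime(2024, 1, 15, 9, 0, 0, tzinfo=timezone.utc)

# What actually happened: the cycle completed, and the load was
# released 60 seconds later.
cycle_done = stamp("steriliser", t0)
load_released = stamp("release_desk", t0 + timedelta(seconds=60))

# What the records appear to show when read side by side.
released_before_cycle_ended = load_released < cycle_done

print(cycle_done.isoformat())       # 2024-01-15T09:00:00+00:00
print(load_released.isoformat())    # 2024-01-15T08:59:30+00:00
print(released_before_cycle_ended)  # True
```

Neither record is false on its own terms. But on the face of the records, the load was released 30 seconds before the cycle ended, and it is the face of the records that will be examined.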

The system continues to operate. Reports still generate. Audits are still passed. But the Evidential Foundation is slowly weakening. And no Dashboard will tell you that.

By the time these questions are asked, the opportunity to correct the system has already passed. An Application to have the Court not admit the data as Evidence is in train and cannot be interrupted.

An Analogy Worth Considering – Think of the Digital as Analogue

In Decontamination Environments, the integrity of physical systems is well understood. A steriliser must be validated. Its seals must be checked. Its performance must be demonstrated, not assumed.

But Digital Systems are often treated differently. They do not leak. They do not rattle. They do not visibly degrade. But they are performing a similar function. One preserves the safety of instruments. The other preserves the integrity of the record.

Or put more directly: One keeps the Instruments safe; The other keeps the Truth safe.

For both require attention – both require validation. And failure in either case has consequences.

The Moment of Challenge

To understand the practical impact, consider what happens when Digital Records are tested in a Legal Setting. So let us consider a frequent circumstance…

Imagine your organisation (hospital, laboratory, manufacturer) is being sued by an active and well-motivated Claimant, and needs to rely on its digital records in order to defend itself.

The Trial begins, but before the substance of the case is examined, a preliminary issue arises as Counsel for your opponent makes an application to the Court. That application is for the Judge to exclude your computer-generated records. This is a challenge of Admissibility.

The Trial stops and a focused Legal Argument begins. Attention turns not to what the records say, but to how they were created, stored, and maintained.

At that point, your system is put on trial. Questions follow:

- How was the system validated?

- How are changes tracked?

- Can your organisation prove your data has not been altered?

- What evidence exists that your system was operating normally at the time it created these digital records?

If clear and compelling answers cannot be given, the risk is not simply that the evidence is weakened. The risk is that it is not admitted at all.

At that point, the organisation may be left with no evidential foundation on which to defend itself. And these consequences are no longer abstract. They become organisational, financial, and – in some cases for those involved – personal.
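Two of the questions above, how changes are tracked and whether alteration can be ruled out, lend themselves to a simple illustration. The Python sketch below shows one well-known technique, a hash chain, in which every entry in a log carries a digest that depends on the entry before it. It is a sketch under stated assumptions, not a recommendation and not a complete answer to a challenge of Admissibility. The names `append` and `first_broken_link`, and the example events, are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # stand-in "previous digest" for the very first entry


def _digest(prev: str, event: dict) -> str:
    # Each digest covers the event AND the digest of the entry before it.
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()


def append(log: list, event: dict) -> None:
    """Add an event to the log, linked to the entry that precedes it."""
    prev = log[-1]["digest"] if log else GENESIS
    log.append({"event": event, "prev": prev, "digest": _digest(prev, event)})


def first_broken_link(log: list):
    """Return the index of the first entry that fails verification, or None."""
    prev = GENESIS
    for i, entry in enumerate(log):
        expected = _digest(prev, entry["event"])
        if entry["prev"] != prev or entry["digest"] != expected:
            return i
        prev = entry["digest"]
    return None


# Hypothetical sequence of events; the values are illustrative only.
log = []
append(log, {"action": "create", "value": 134})
append(log, {"action": "amend", "value": 121})
append(log, {"action": "sign_off", "by": "QA"})
print(first_broken_link(log))   # None

log[1]["event"]["value"] = 134  # a silent, after-the-fact edit
print(first_broken_link(log))   # 1
```

The point of the design is that a legitimate change is recorded as a new entry, and the earlier entry is left alone. An edit made to an earlier entry breaks the chain at that entry, so it can be detected rather than merely denied. As with any such scheme, its value depends on the most recent digest being held somewhere beyond the reach of the system being examined, which is assumed here and not shown.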

From Data to Evidence

This leads to a distinction that is becoming increasingly important. There is a difference between: data that supports operations; and data that can withstand legal challenge.

Those two things are not the same. And in a legal context, the second is the one that matters.

Increasingly, organisations are beginning to recognise the need for what might be described as “Litigation-Grade Data” – records that are not only accurate in practice, but demonstrably reliable under scrutiny.

Now this does not require abandoning existing systems; but it does require a shift in thinking. The question is no longer: “Are we recording what happens?”, but becomes: “Could we prove it – if we had to?”

A Quiet but Important Shift

As Digital Systems and their Computer-Generated Data become more central to Clinical, Technical, and Regulatory Processes, they are also becoming central to the outcome of Litigation.

When that happens, their role changes. They are no longer just operational tools. They become “Evidential Instruments”. And Evidential Instruments are judged differently. They must prove integrity, continuity, and reliability – not as a matter of assumption, but as a matter of proof. And that proof must exist before the question is asked.

Looking Ahead

In many organisations, this shift, this reality, has not yet been recognised. Systems continue to perform well. Data continues to accumulate. Compliance frameworks continue to function.

But a question is beginning to surface – quietly, and sometimes most uncomfortably: “What would happen if this Data had to stand on its own in a legal context?”

That question does not demand an immediate answer. But it does lead naturally to the next stage in our conversation. Because once the limits of current systems are understood, attention turns to where risk may be most exposed – and what, in practical terms, can be done about it.

In the next Article, we move to more specific, and often overlooked, sources of Legal Risk: decontamination event records, laboratory testing records and certification records.

These are routinely treated as definitive. Relied upon. Rarely questioned. But when subjected to Evidential Scrutiny – they can exhibit many of the same vulnerabilities we have explored here.

The question we will address is a simple one, but it carries real weight:

When these types of computer-generated records are challenged – will they stand, or will they unravel into legal uselessness? The question is no longer theoretical. The question is: “What happens when that data is tested legally, in practice?”

As you read this series of Articles, please be assured that, by the last Article, my intention is not to leave you, the reader, with just Questions. No – my intention is to leave you with Answers.

Disclaimer. The views and opinions expressed in this article are solely those of the author and do not necessarily reflect the official policy or position of Test Labs Limited. The content provided is for informational purposes only and is not intended to constitute legal or professional advice. Test Labs assumes no responsibility for any errors or omissions in the content of this article, nor for any actions taken in reliance thereon.
